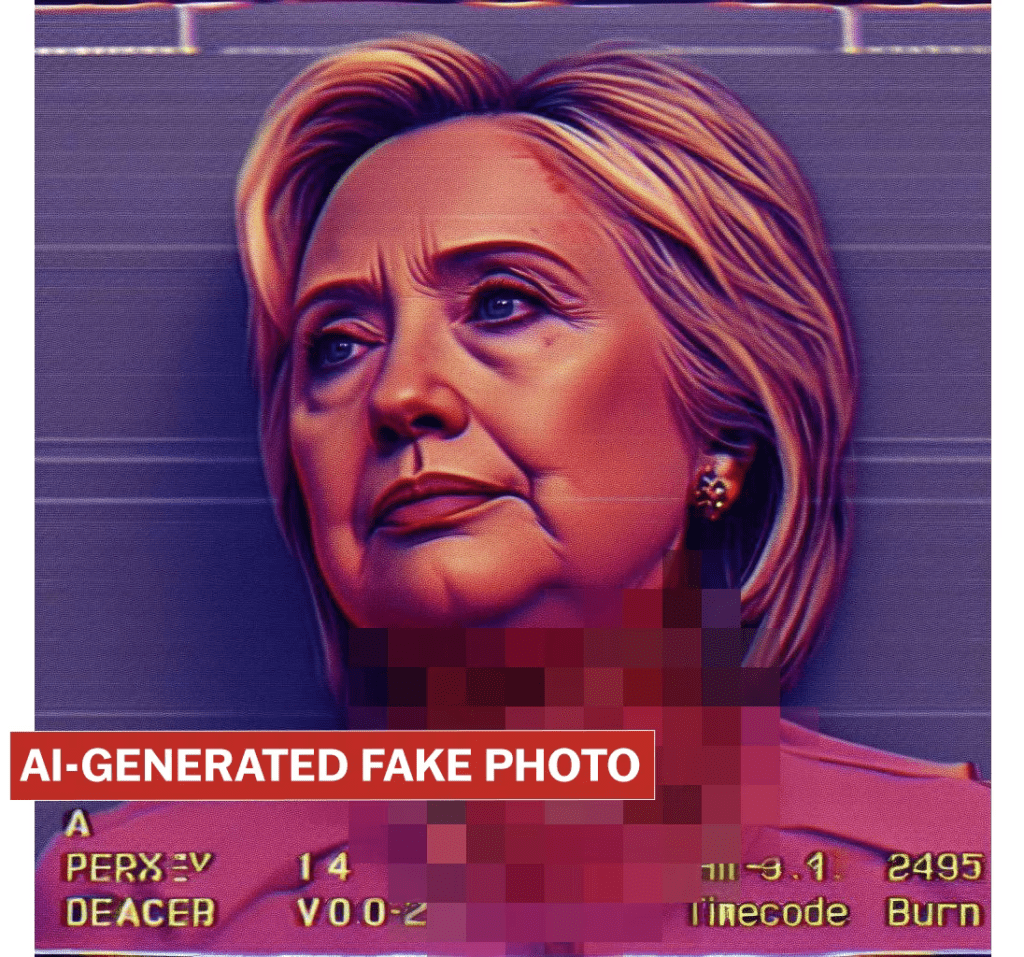

- Microsoft's AI, integrated into Bing and Windows Paint, generates violent images, including those of high-profile figures.

- Despite claims of safety, Microsoft's AI still produces disturbing content.

- The incident highlights the necessity for tech companies to anticipate and address potential misuses of AI.

Warning

This article includes horrifying AI-generated images that have been blurred, but some readers may still find them disturbing.

December 29, 2023: Microsoft’s AI, known for its integration into tools like Bing and Windows Paint, has recently been in the spotlight for generating alarmingly violent images.

These include graphic depictions of public figures such as Joe Biden, Donald Trump, Hillary Clinton, and Pope Francis, sparking widespread concern over the safety of AI technology.

Microsoft has stated that the AI, which uses DALL-E 3 technology from OpenAI, is safe. However, recent findings contradict this claim, revealing that the AI can produce images depicting extreme violence against various groups and individuals.

This has raised questions about Microsoft’s responsibility and the effectiveness of its safety measures.

The controversy brings to the forefront the growing ubiquity of AI in everyday technology and the challenges in ensuring its safe use.

With AI’s potential for misuse, particularly in creating ‘deepfake’ images, there’s an urgent need for tech companies to take proactive steps in safeguarding their AI systems.

This issue is especially crucial for Microsoft, a key player in shaping AI’s future, and its response to this situation will be closely watched.

The incident serves as a reminder of the importance of responsible AI development and deployment, particularly as we approach sensitive periods like general elections.

While AI technology offers tremendous benefits, it also presents significant risks that require diligent management and oversight.

Microsoft’s current situation underscores the necessity for robust safety mechanisms and ethical considerations in the rapidly evolving world of AI.