In a world where seeing is no longer believing, deepfakes have grabbed headlines and shocked people everywhere. Imagine a video on your phone showing a famous star somewhere they never were.

That happened recently with a video that appeared to show Rashmika Mandanna, a big movie star, but it was entirely fake: a deepfake. These videos are made with AI techniques, and they’re getting so good that it’s hard to tell real from fake.

That’s not all: deepfakes keep hitting the headlines, with even Hollywood stars caught up in the mix. Gayle King, a famous journalist, had to tell her fans that a video showing her promoting a weight loss product was a lie: it was a deepfake.

Tom Hanks, a big-time movie actor, also had to speak up about a fake video of him selling a dental plan. Mr. Beast, a big name on YouTube, found a scam ad using his face to fake a phone giveaway.

Deepfakes are not just fake videos of stars. There are other types too, such as audio deepfakes, photo deepfakes, and deepfake avatars. They can trick people, scam them out of money, and even interfere with important things like elections.

But don’t worry; this research blog is here to be your guide.

We’ll show you the latest ways to spot these Deepfakes, how to keep your face safe from being used in a deepfake, and what you can do to fight this technology.

What Are Deepfakes

To understand anything, we must understand its roots. So let’s start with what deepfake AI actually is and where it came from.

Deepfakes grew out of academic research into generative AI models in the 1990s.

Neural networks were trained to reconstruct and modify images, video, and audio. However, these early systems were limited in quality and scope.

The term “deepfake” emerged in 2017 from a Reddit user who shared face-swapped celebrity p*rn videos. They used generative adversarial networks (GANs) – an architecture with two competing neural nets, one generating fakes and the other evaluating realism. This approach resulted in significant improvements in quality.

Soon, easy-to-use apps like FakeApp spread deepfake creation to the public. Amateur communities swapped celebrity faces onto p*rn and memes.

A watershed moment was a 2018 deepfake video of Barack Obama, demonstrating the potential for fabricating speeches. Beyond p*rn, deepfakes were weaponized for disinformation, harassment, and fraud.

But how are deepfakes created? Here’s the trick.

How Image And Video Deepfakes are Created Through Deep Neural Networks

Modern deepfakes rely on deep neural networks, AI models loosely inspired by the multilayered neural structure of the human brain.

Each layer processes inputs and passes signals onto the next. Stacking many layers enables highly complex feature extraction and synthesis.

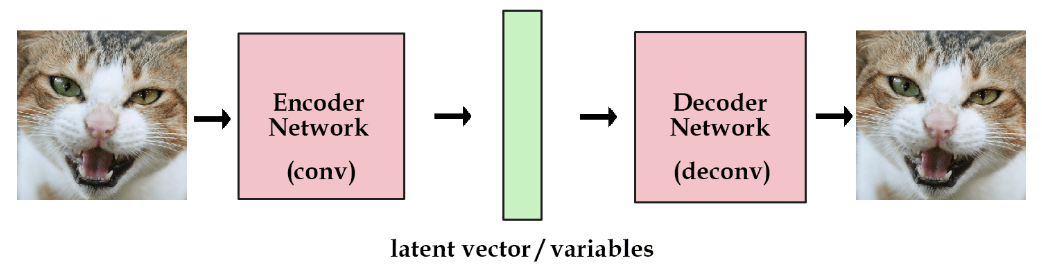

A key technique is the autoencoder. Its encoder compresses data into a compact latent space representation that holds the core features, and its decoder reconstructs the output from this compressed code.

The encoder focuses on posture, lighting, and expressions – information shared between people. The decoder then applies individualized facial features and textures.

A separate decoder is trained for each person, using many images and videos of that person as reference.

To create a deepfake, the encoder extracts pose and expression from the source media. The decoder overlays the target’s face onto this. A GAN hones realism through an adversarial training loop. The generator refines its fakes to fool the discriminator.

This arms race between the two networks, powered by deep neural architectures and massive datasets, enables deepfakes to achieve such convincing results.

The outputs can mimic facial mannerisms, speech patterns, tone, cadence, laughs, vocabulary, and other characteristics that capture the essence of an individual.

Let’s look at some basic concepts of Deepfakes and how they are created:

Basic Concepts

Deepfakes leverage advanced machine learning techniques, particularly neural networks, to create convincing fake images, videos, or audio recordings.

The two main types of neural networks used in Deepfakes are:

- Autoencoders: These networks consist of two parts – an encoder and a decoder. The encoder compresses an image into a simpler representation (latent space), capturing its essential features. The decoder then reconstructs an image from this compressed form, potentially altering some aspects to create a deepfake.

- Generative Adversarial Networks (GANs): GANs involve two parts – a generator and a discriminator. The generator creates images, while the discriminator evaluates them against real images to determine authenticity. The generator aims to create convincing images that the discriminator can’t distinguish from real ones.
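To make the generator-versus-discriminator contest above a little more concrete, here is a minimal sketch of the two loss values commonly used to train a GAN (binary cross-entropy for the discriminator, and the so-called non-saturating loss for the generator). It contains no neural network and cannot produce images; the scores are made-up numbers, and the function names are our own, chosen only for illustration.

```python
import math

def discriminator_loss(real_scores, fake_scores):
    """Binary cross-entropy for the discriminator.

    Scores are the discriminator's probabilities (between 0 and 1) that an
    input is real. The loss is low when real inputs score near 1 and fakes
    score near 0.
    """
    real_term = -sum(math.log(s) for s in real_scores) / len(real_scores)
    fake_term = -sum(math.log(1.0 - s) for s in fake_scores) / len(fake_scores)
    return real_term + fake_term

def generator_loss(fake_scores):
    """Non-saturating generator loss: low when fakes are scored as real."""
    return -sum(math.log(s) for s in fake_scores) / len(fake_scores)

# Hypothetical discriminator scores, for illustration only.
real_images = [0.90, 0.95, 0.85]
early_fakes = [0.05, 0.10, 0.08]   # the discriminator spots these easily
later_fakes = [0.45, 0.55, 0.50]   # the discriminator is close to guessing

# As the fakes improve, the generator's loss falls and the discriminator's rises.
print(generator_loss(early_fakes) > generator_loss(later_fakes))
print(discriminator_loss(real_images, early_fakes)
      < discriminator_loss(real_images, later_fakes))
```

Both lines print `True`: better fakes mean a lower loss for the generator and a higher one for the discriminator, which is the tug-of-war that training a GAN plays out.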

Creation Process

The process of creating a Deepfake involves several steps:

- Data Collection: Gathering a large dataset of images or videos is crucial. These should display a range of expressions and angles for the faces involved.

- Training: The neural network is trained using this data. For an autoencoder, it learns to compress and then reconstruct these images. In the case of GANs, the generator tries to create images that the discriminator can’t differentiate from the real ones.

- Encoding and Decoding: In an autoencoder, the subject’s face is encoded into a latent space and then decoded while superimposing features of another face (e.g., a celebrity), thus creating a blend that looks like the latter but retains some underlying features of the former.

- Iteration and Refinement: The process often requires multiple iterations, with adjustments to improve realism. This might involve fine-tuning the network or altering input data.

- Post-Processing: Even after creating a deepfake, additional editing may be necessary to enhance realism, such as adjusting lighting, colors, and syncing audio.

That’s a very basic outline of how deepfakes, and the systems that generate them, are made.

There are plenty of tools on the web that create very realistic-looking deepfakes, but we won’t go into them here: a guide to avoiding alcohol addiction shouldn’t end by recommending the best drinks to try (sorry to disappoint you all).

Now that we’ve covered what image and video deepfakes are and how they’re created, let’s look at how audio deepfakes are made before turning to how you can protect yourself.

How Audio Deepfakes Are Created

Deepfake audio technology, or voice cloning, is a rapidly evolving field that uses artificial intelligence (AI) and machine learning algorithms to create realistic synthetic speech.

This technology can generate audio clips that sound like someone saying things they never actually said.

Definitions

- Deepfake Audio is synthetic media where a person’s voice is replicated to create convincing fake audio clips.

- Voice Cloning involves creating a high-quality replica of a human voice using text-to-speech systems. It’s a process of generating a synthetic voice closely resembling a target voice.

Both deepfake audio and voice cloning use deep learning, a subset of AI that mimics human brain functions, to analyze and process large datasets of voice recordings.

This technology enables the creation of new audio that matches the tone, pitch, and mannerisms of the input voice.

Creation of Audio Deepfakes and Voice Cloning

We’re sorry, but we couldn’t figure out the exact systems and methods behind deepfake voice cloning.

Since we didn’t want to give an AI-made answer, here’s what we know about how these voice cloning systems work:

- Data Collection: Collect extensive voice samples of the target voice.

- Training: Use these samples to train a deep-learning model.

- Generation: The model generates new audio matching the target voice’s characteristics.

If you want more information, check out this post or this one.

How to Protect Yourself from Deepfakes (Audio and Video Scams)

Here are some things to keep in mind so that you don’t have to watch a deepfake of yourself go viral, or see someone impersonate you to con your loved ones.

First, we’ll go over several ways to protect yourself from Deepfake videos and images.

1. Be Skeptical and Vigilant

- Always approach online media with a healthy dose of skepticism. Understand that deepfakes exist and are being used in various ways.

- Question the authenticity of unusual or sensational images and videos, especially those that seem out of character for the individuals involved.

2. Educate Yourself and Others

- Learn how deepfakes are made and what they look like. Educating yourself about their characteristics can help you identify potential fakes.

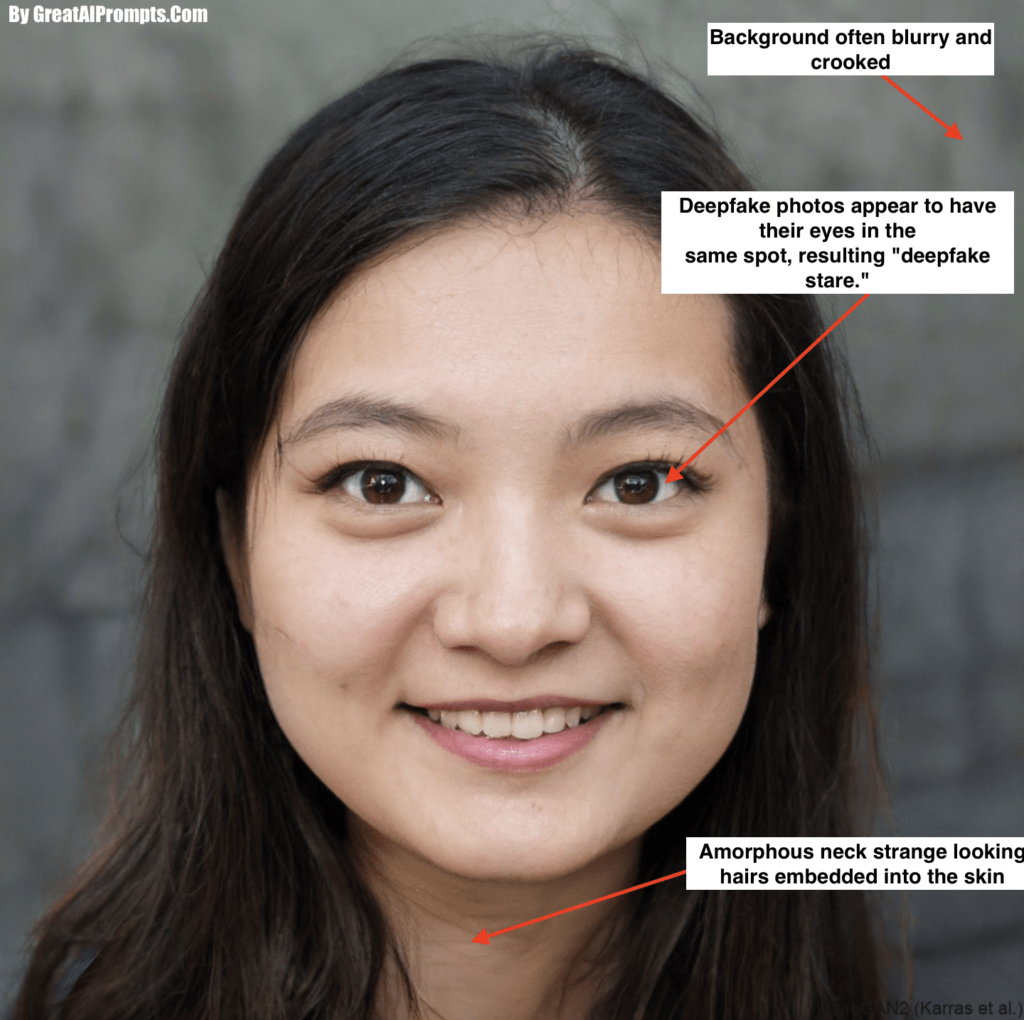

- Share your knowledge with friends and family, especially those who may be more susceptible to online scams. We would suggest you study random images on thispersondoesnotexist.com to educate yourself more about what deepfakes look like.

3. Critical Analysis of Media

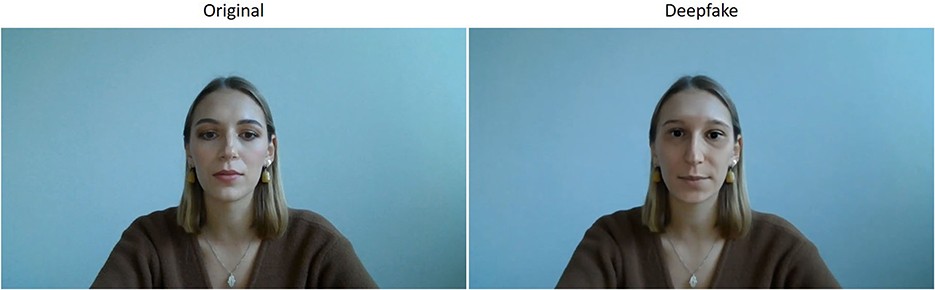

- Look for inconsistencies such as unnatural facial movements, awkward lighting and shadows, or issues with lip-syncing.

- Pay attention to the context of the video or image. Often, deepfakes are used in implausible or sensational scenarios.

4. Verify Sources

- Cross-check the information with reliable news outlets. If a story is genuine, multiple credible sources will likely report it.

- For videos of public figures, check their official social media accounts or websites for confirmation or denial.

5. Use Technology to Your Advantage

- Utilize deepfake detection tools available online. While not foolproof, they can often help identify manipulated content.

- Regularly update your cybersecurity software to protect against malware that could be used to create or disseminate deepfakes.

6. Be Careful with Personal Information

- Avoid sharing excessive personal information and images online, as they can be used to create deepfakes.

- Review and adjust your social media privacy settings to limit who can view your photos and videos.

7. Legal and Policy Awareness

- Stay informed about the legal framework regarding deepfakes in your region. Some places have laws against creating or distributing deepfakes.

- Support policies and legislation that aim to combat the misuse of deepfake technology.

8. Use Watermarking and Metadata

- Watermark your own images and videos. This can deter misuse and helps in establishing the original source.

- Be aware of metadata in digital files, which can provide information about the origin and authenticity of a file.
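To show what “metadata in digital files” can look like in practice, here is a small sketch that lists the text fields stored in a PNG file’s `tEXt` chunks, using only Python’s standard library. The function names and the demo data (`ExampleEditor 1.0`, `Jane Doe`) are made up for illustration, and the demo bytes are an image-less skeleton rather than a viewable picture.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"

def read_png_text_chunks(data: bytes) -> dict:
    """Return the keyword/text pairs stored in a PNG's tEXt chunks."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    found = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk starts with a 4-byte big-endian length and a 4-byte type.
        length, chunk_type = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if chunk_type == b"tEXt":
            # tEXt layout: keyword, a zero byte, then the text (Latin-1).
            keyword, _, text = body.partition(b"\x00")
            found[keyword.decode("latin-1")] = text.decode("latin-1")
        if chunk_type == b"IEND":
            break
        pos += 8 + length + 4  # header + body + 4-byte CRC
    return found

def make_chunk(chunk_type: bytes, body: bytes) -> bytes:
    """Build one PNG chunk (used here only to create demo data in memory)."""
    crc = zlib.crc32(chunk_type + body) & 0xFFFFFFFF
    return struct.pack(">I", len(body)) + chunk_type + body + struct.pack(">I", crc)

# Made-up demo data: a PNG skeleton with two text fields and no image.
demo = (
    PNG_SIGNATURE
    + make_chunk(b"tEXt", b"Software\x00ExampleEditor 1.0")
    + make_chunk(b"tEXt", b"Author\x00Jane Doe")
    + make_chunk(b"IEND", b"")
)
print(read_png_text_chunks(demo))
```

For a real file you would pass in the file’s bytes, for example `read_png_text_chunks(open("photo.png", "rb").read())`. Keep in mind that metadata can be edited or stripped, so treat it as a hint about a file’s origin, not as proof.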

9. Seek Professional Advice if Targeted

- If you suspect you’re the target of a deepfake-related scam, seek advice from cybersecurity professionals or law enforcement.

- Report deepfake scams to appropriate authorities to help prevent their spread and to protect others.

10. Encourage Transparency from Tech Companies

- Support initiatives by tech companies to make AI-generated content more transparent, such as adding watermarks or metadata.

- Advocate for greater responsibility and ethical practices among companies that develop AI and deepfake technologies.

11. Maintain a Critical Mindset During Political Campaigns

- Be particularly vigilant during election seasons when deepfakes can be used to mislead or manipulate public opinion.

- Verify political videos and images from multiple independent sources before believing or sharing them.

12. Regularly Update Security Software

- Keep the security software on your computer and internet-connected devices up to date.

- Use strong, unique passwords for your online accounts and change them regularly.
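If you’re wondering what a “strong, unique” password looks like, here is a minimal sketch that generates one with Python’s standard `secrets` module, which is designed for security-sensitive randomness. The function name, the default length of 16, and the rule requiring lowercase, uppercase, and digit characters are our own assumptions; a good password manager will do the same job for you.

```python
import secrets
import string

def generate_password(length: int = 16) -> str:
    """Return a random password with lowercase, uppercase, and digit characters."""
    if length < 8:
        raise ValueError("use at least 8 characters")
    alphabet = string.ascii_letters + string.digits + string.punctuation
    while True:
        candidate = "".join(secrets.choice(alphabet) for _ in range(length))
        # Retry until the candidate has at least one of each required kind.
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)):
            return candidate

print(generate_password())
```

Generate a fresh password for every account so that one leaked password doesn’t unlock the others.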

When examining a suspected deepfake, keep these things in mind:

- Observation of Cheeks and Forehead: Examine the skin texture in these areas. Does it look unnaturally smooth or excessively wrinkled? Consider whether the apparent age of the skin matches the apparent age of the hair and eyes. An inconsistency might indicate digital manipulation.

- Analysis of Eyes and Eyebrows: Pay attention to the shadowing around the eyes and eyebrows. Are the shadows consistent with the lighting and natural facial contours? Inconsistencies here can be revealing.

- Examination of Glasses: Look closely at the glasses, if present. Notice the presence and intensity of glare. Does the glare respond logically to the person’s movements, or does it seem static and unnatural?

- Inspection of Facial Hair: Assess the authenticity of any facial hair. Observe whether elements like mustaches, sideburns, or beards appear genuine. In deepfakes, facial hair can be inaccurately added or removed, leading to unusual appearances.

- Verification of Facial Moles: Scrutinize any moles on the face. Are they consistent in appearance with the rest of the skin, or do they seem artificially placed or altered?

- Analysis of Blinking Patterns: Monitor the frequency of blinking. An unnatural rate of blinking, either too frequent or too sparse, can be an indicator of a deepfake.

- Evaluation of Lip Size and Color: Check if the lips’ size and color are in harmony with the overall facial complexion. Discrepancies in these features can signal manipulation.

Each of these points plays a crucial role in identifying potential deepfakes.
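The blinking check above can be turned into simple arithmetic. Here is a rough sketch that computes blinks per minute from blink timestamps and flags clips that fall outside a range. It assumes you have already noted when each blink happens (by hand or with some other tool), and the `low` and `high` bounds are illustrative guesses, not validated thresholds, since normal blink rates vary a lot between people and situations.

```python
def blinks_per_minute(blink_times_s, clip_length_s):
    """Average blink rate for a clip, given blink timestamps in seconds."""
    if clip_length_s <= 0:
        raise ValueError("clip length must be positive")
    return len(blink_times_s) * 60.0 / clip_length_s

def blink_rate_looks_unusual(blink_times_s, clip_length_s, low=5.0, high=40.0):
    """Flag clips whose blink rate falls outside an assumed plausible range.

    The low/high bounds are illustrative assumptions, not validated thresholds.
    """
    rate = blinks_per_minute(blink_times_s, clip_length_s)
    return rate < low or rate > high

# Hypothetical 30-second clips, with blink times noted by hand.
sparse = [12.4]                                            # one blink
typical = [2.1, 5.8, 9.0, 13.5, 17.2, 21.0, 24.6, 28.3]    # eight blinks

print(blinks_per_minute(sparse, 30), blink_rate_looks_unusual(sparse, 30))
print(blinks_per_minute(typical, 30), blink_rate_looks_unusual(typical, 30))
```

The first clip works out to 2 blinks per minute and is flagged; the second works out to 16 and is not. A flag like this is only a prompt to look closer, never a verdict on its own.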

How to Protect Yourself from Deepfake Voice Cloning Scams

Protecting yourself from deepfake audio cloning scams is crucial.

Here are some comprehensive techniques and tips to help you safeguard against these threats:

Researchers say technologies like spectrograms can show when voice recordings are fake. But most of us do not have the luxury of a voice analyzer when an attacker calls. Listen for a monotone delivery, odd pitch or emotion, and lack of background noise. Voice fakes can be hard to detect. If you receive an odd call from a legitimate organization, you can verify if the call is real by first hanging up then calling the organization back. Be sure to use a trusted phone number, such as a phone number you already have in your contact list, a phone number printed on a bill or statement from the organization, or the phone number on the organization’s official website.

nashville.gov
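For the curious, here is a minimal sketch of how the spectrograms mentioned in the quote above are computed: the audio is cut into short frames, and each frame is broken down into the strength of its frequencies. This version uses a slow, naive Fourier transform from Python’s standard library and a synthetic 1000 Hz tone as a stand-in for a recording. It does not detect fakes by itself; it only shows the kind of picture an analyst would inspect. The function name and parameters are our own.

```python
import cmath
import math

def spectrogram(samples, frame_size=256, hop=128):
    """Magnitude spectrogram via a naive short-time Fourier transform.

    Returns one list of magnitudes (bins 0..frame_size // 2) per frame.
    """
    # A Hann window softens the frame edges to reduce spectral leakage.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_size - 1))
              for n in range(frame_size)]
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = [samples[start + n] * window[n] for n in range(frame_size)]
        magnitudes = []
        for k in range(frame_size // 2 + 1):
            total = sum(frame[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                        for n in range(frame_size))
            magnitudes.append(abs(total))
        frames.append(magnitudes)
    return frames

# Synthetic stand-in for a recording: a pure 1000 Hz tone sampled at 8000 Hz.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 1000 * t / sample_rate) for t in range(1024)]

spec = spectrogram(tone)
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
print(peak_bin * sample_rate / 256)  # strongest frequency in the first frame: 1000.0
```

Real speech produces a much richer pattern than a single tone, and in practice you would use an optimized FFT library instead of this slow loop.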

- Be Mindful of Unexpected Calls: Exercise caution with unexpected calls, even from familiar contacts. Scammers often use cloned voices of people you know. If you receive a call that seems unusual or suspicious, verify it by contacting the person through a different medium or method.

- Verify Identity in Unusual Requests: If you receive an unusual request from a known contact, especially one involving money or sensitive information, verify the caller’s identity. You can do this by asking questions only the real person could answer or by using a pre-agreed code word with family and friends.

- Use of Code Words: Establish a secret code word with family members and close contacts. This word should be something not easily guessed or found on social media. In an unexpected call, asking for this code word can help distinguish a real call from a scam.

- Check for Digital Artifacts and Inconsistencies: On video calls, look for signs like excessive blinking, unnatural head movements, or inconsistencies in the background or lighting that might suggest a deepfake. Ask the person to perform simple actions like turning their head or placing a hand in front of their face, as deepfakes may not replicate these movements accurately.

- Don’t Share Personal Information: Be cautious with your personal identifying information. Scammers can use details like your birth date, phone number, and even pet names to impersonate you or someone you know.

- Question the Authenticity: If you come across a voice recording of an influential person that seems out of character or aligns too perfectly with your beliefs, question its authenticity. Deepfakes can be designed to exploit confirmation biases.

- Insist on Physical Meetings: In cases of online relationships or interactions that seem suspicious, insist on meeting in person. While not always practical, it’s a definitive way to verify authenticity.

- Educate Yourself and Others: Spread awareness about deepfake technology, its capabilities, and its dangers. Education is a powerful tool in combating scams.

- Practice Digital Hygiene: Regularly update your cybersecurity software and practice good digital hygiene, including using strong passwords and being cautious with the links and attachments you open.

The End

As we wrap up, let’s turn to the future and the broader implications of this technology.

As we’ve seen, deepfakes have evolved from simple entertainment tools to complex systems capable of influencing public opinion and personal lives. Their applications range from harmless fun to serious concerns like misinformation and identity fraud.

There’s a growing emphasis on developing sophisticated detection methods and legal frameworks to manage these challenges.

By understanding the nature of deepfakes and their capabilities, we can better prepare ourselves to recognize and counteract their potential misuse.

While we welcome these technical advancements, let us also resolve to use them responsibly and with integrity.

Our Favorite Resources to Know More About Deepfakes: